Simulating the Quantum World

How Far Can Classical Computers Go?

Before we start, I’ve recently launched a crowdfunding campaign to fund our much needed workstation project. Just to recap, the kit we desperately need is a fast, robust PC, with dual monitors, one for development and the other for results, writing and documentation. We also need an uninterruptible power supply (UPS) and two large, fast data disks for professional level data analysis and daily, weekly and monthly backups. If you’d like to pitch in and help, you’ll find the link here.

And now, today’s post!

It has become something of a pattern. A quantum computing team say at Google, IBM, a university consortium, announces that their machine has solved a problem that would take a classical computer an impractical amount of time. The press covers it. The phrase ‘quantum advantage’ appears. And then, within months, sometimes weeks, a team of classical computing researchers publishes a paper showing they can match or closely approach the result on conventional hardware, given a sufficiently clever algorithm and enough cluster time.

This is not a story about quantum computing failing. It is a story about how hard it is to establish a clear frontier and about how seriously classical simulation researchers take the challenge of finding one. The race is real, it is ongoing, and it is one of the most productive tensions in contemporary computing.

To understand why the frontier matters, and why crossing it permanently is genuinely difficult, we need to understand what classical simulation can do, how it does it, and precisely where it runs out of road. That is what this post is about.

Quantum mechanics has exact analytical solutions for almost nothing. The hydrogen atom, the quantum harmonic oscillator, a handful of other toy systems, these can be solved with pencil and paper. Everything else, from the helium atom upward, requires approximation.

This is not a failure of ingenuity. It is a structural feature of the mathematics. The Schrödinger equation for a system of interacting particles couples every degree of freedom to every other. Solve for one particle and the answer depends on all the others. The equations resist separation, and separation is the key to analytical tractability.

Simulation is the response to this. Rather than finding a closed-form solution, we discretise the problem; divide space into a grid, represent the quantum state as a matrix, approximate time evolution as a sequence of finite steps and let the computer carry the calculation forward numerically. We trade exactness for reach. The solution we get is approximate, but it covers territory that analytical methods cannot touch.

At the centre of any quantum simulation is the Hamiltonian; the operator that encodes the total energy of the system. For a simulation, this becomes a matrix: rows and columns corresponding to the basis states of the system, entries encoding how those states interact and evolve.

Once we have the Hamiltonian matrix H, time evolution is formally straightforward. The state of the system at time t is given by applying the matrix exponential e^{−iHt} to the initial state. This is the quantum equivalent of saying “let the system run.” The matrix exponential propagates the wavefunction forward in time according to the physics encoded in H.

In practice, computing e^(−iHt) for a large matrix is expensive, and a range of techniques exist to make it tractable, such as Krylov subspace methods, Padé approximations, split-operator schemes.1 But the conceptual core is simple: the Hamiltonian is a matrix, time evolution is exponentiation, and simulation is repeated application.

Grid discretisation handles space. Rather than representing the wavefunction as a continuous function, we evaluate it at a finite set of points on a spatial grid. The finer the grid, the more accurate the representation, and the more memory and computation required. Derivatives become finite differences. Integrals become sums.

The continuous problem becomes a discrete one that a machine can hold. But this approach hits a fundamental wall as systems grow. The wavefunction of N particles lives in a 3N-dimensional space, so the grid grows exponentially with the number of particles. This is the curse of dimensionality, and it is the deeper reason why simulating quantum systems at scale is hard and why more sophisticated methods are needed.

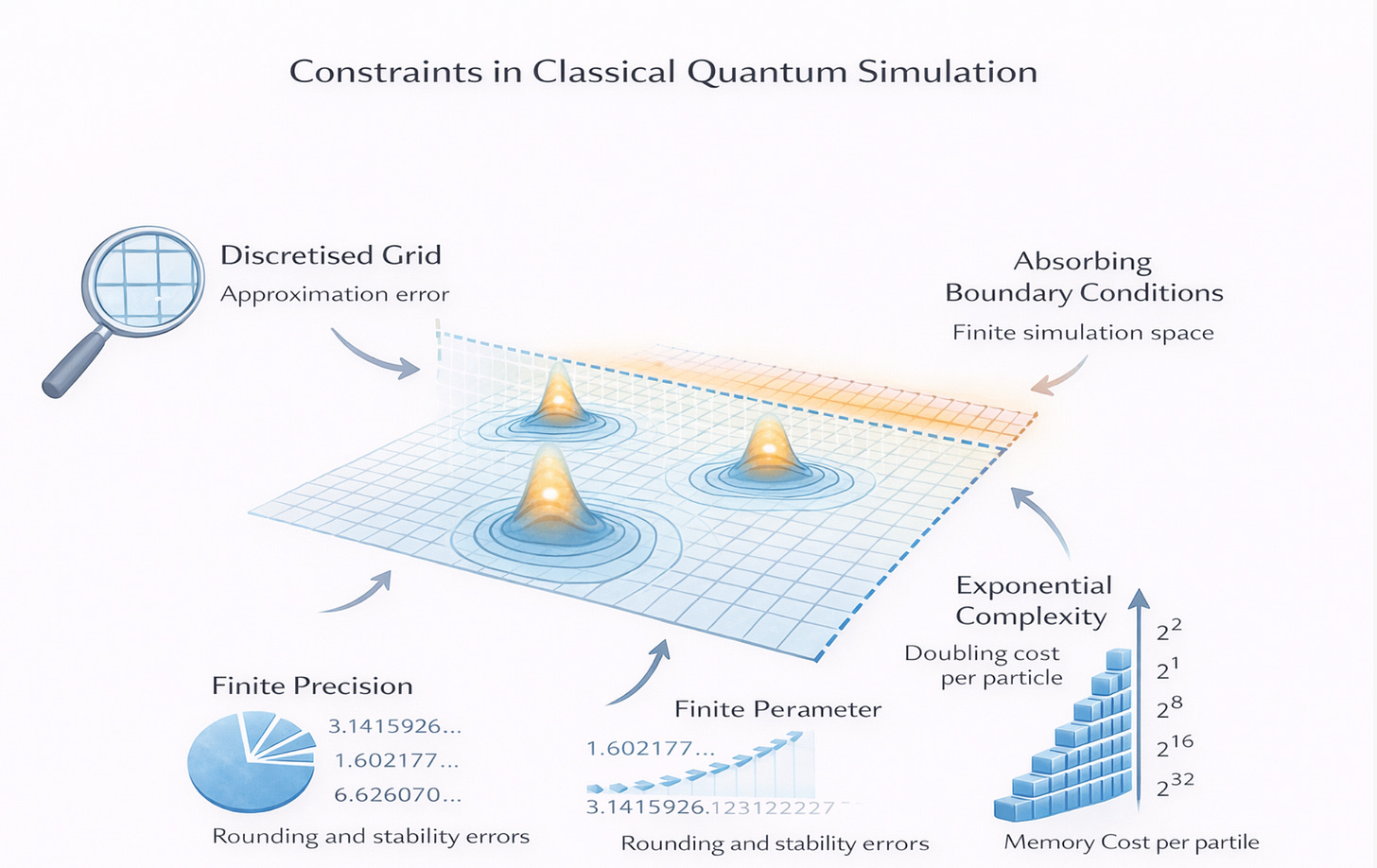

Three constraints shape every classical quantum simulation, and being honest about them is what separates reliable science from wishful benchmarking.

Boundary conditions define the edges of the simulation. A wavefunction that reaches the boundary of our grid needs to do something, such as, go to zero, wrap around periodically, or be absorbed. The choice is not neutral. Periodic boundary conditions introduce artificial long-range correlations. Hard-wall boundaries create reflections. Absorbing boundaries require additional machinery. The physics at the edges of our grid bleeds into the physics at the centre, and managing this carefully is a significant part of the craft.

Discretized space introduces approximation error at every scale finer than the grid spacing. Features of the wavefunction smaller than one grid cell are invisible to the simulation. This matters most where the wave function varies rapidly. For instance, near atomic nuclei, at potential barriers, or in regions of high kinetic energy. Grid refinement reduces the error but increases the cost, and the two scale against each other in ways that quickly become punishing.

Finite precision is the subtlest constraint. Floating point arithmetic accumulates rounding errors. Long time evolutions amplify small errors into large ones. Near-degenerate eigenvalues, i.e. energy levels that are close together, are numerically unstable. The simulation we run is not solving the equations we wrote down; it is solving a slightly perturbed version of them, and characterising that perturbation is part of the job.

The Hilbert space of a quantum system, the mathematical space of all possible states grows exponentially with the number of particles. A system of n two-level particles (spin-1/2, qubits, whatever we like) has 2ⁿ basis states. Representing its full quantum state requires a vector of 2ⁿ complex numbers. Its Hamiltonian is a 2ⁿ × 2ⁿ matrix.

For n = 10, that is 1,024 basis states is trivial. For n = 30, that is roughly one billion, just about manageable with serious hardware. For n = 50, that is roughly one quadrillion. Totally beyond any classical machine’s memory. For n = 100, the number of basis states exceeds the number of atoms in the observable universe.

This is not a hardware problem that faster processors or more RAM will eventually solve. It is exponential growth, and exponential growth wins. Every doubling of system size doubles the memory required. The wall is not far away on the horizon for strongly correlated quantum systems, it arrives at tens of particles.

Classical simulation has sophisticated tools for working around this. Tensor networks exploit the fact that most physical states don’t use the full Hilbert space, quantum Monte Carlo samples the state space statistically rather than exhaustively. But these workarounds have their own constraints, and there are classes of systems they cannot handle. The wall cannot be moved. It can only be approached more cleverly. For researchers working in R Language, a small set of packages does most of the heavy lifting in quantum simulation at tractable scales. For example:

expm handles the matrix exponential directly . expm(M) computes e^M for a given matrix M, which is the core operation in time evolution. For small to moderate Hamiltonians, this is the natural starting point.

deSolve approaches the same problem differently. Rather than exponentiating the Hamiltonian, it integrates the Schrödinger equation as an ordinary differential equation system, stepping the wavefunction forward in time numerically. This is more flexible for time-dependent Hamiltonians and allows adaptive step-size control.

pracma provides the numerical linear algebra toolkit that underlies both approaches including eigenvalue solvers, Krylov methods, numerical integration. It handles the spectral analysis that extracts energy levels and transition rates from a simulation being run.

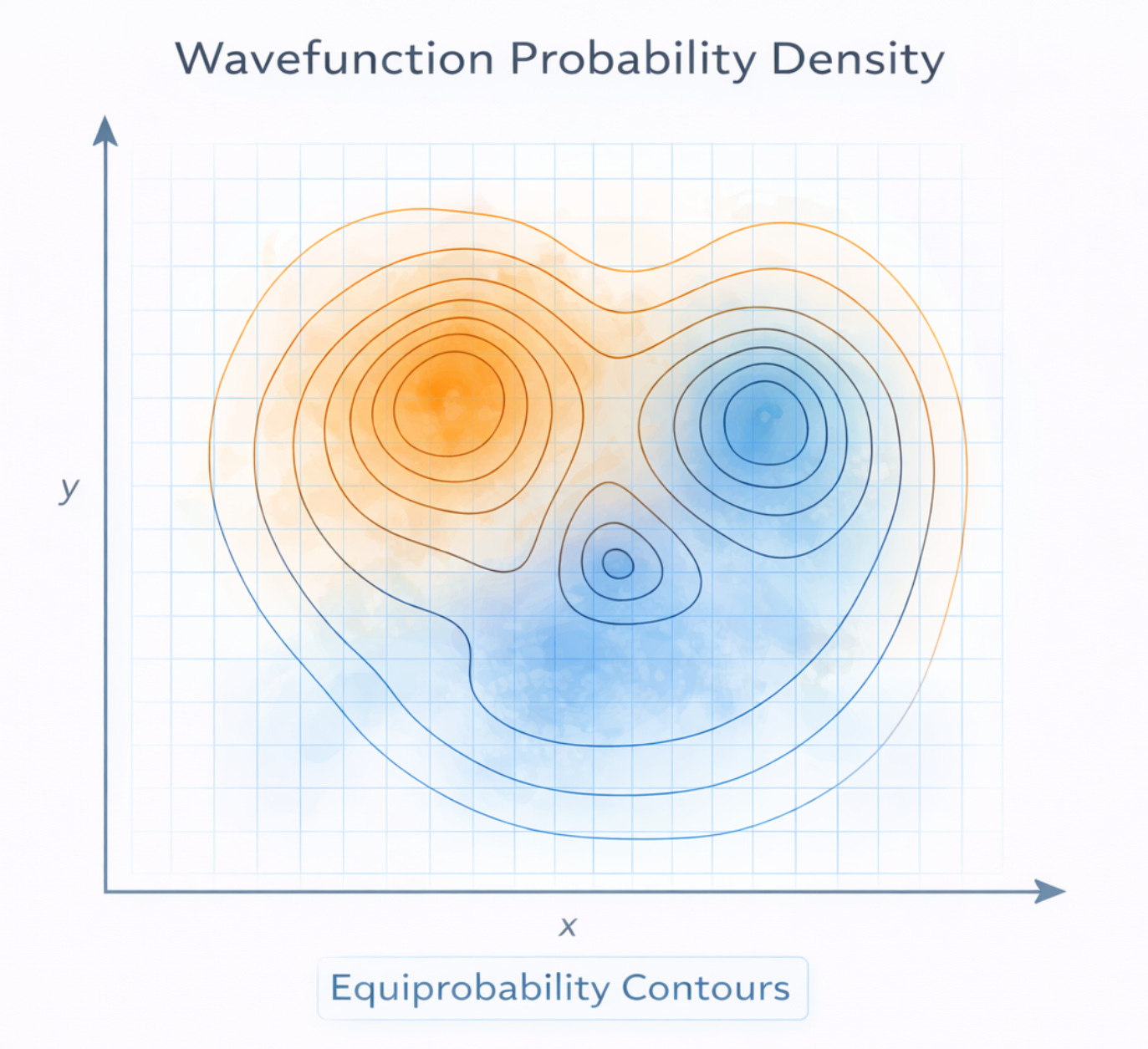

ggplot2 closes the loop between computation and understanding. The outputs of quantum simulation such as probability distributions, time evolution snapshots, and phase space maps. All become interpretable through careful visualisation, and ggplot2’s grammar of graphics maps naturally onto the layered structure of these plots.

Simulation fences infinity into computable structure. Every choice in a classical quantum simulation from grid spacing, boundary condition, time step, to basis size is a decision about which piece of infinity to discard. The constraints are not regrettable compromises. They are what makes computation possible at all. A simulation without boundaries is not a simulation; it is an unsolvable problem wearing a different hat.

The classical approach is powerful, ingenious, and genuinely useful across a wide range of quantum systems. Researchers continue to push it further and continue to surprise quantum computing teams by matching their benchmarks with clever classical algorithms. That race is a sign of health, not failure. It sharpens the question of where quantum hardware actually earns its advantage.

But the exponential wall is real. For strongly entangled many-body systems, for large molecules, for the quantum materials that underlie next-generation technologies, classical simulation runs out of road. The constraints that make it work finite grids, finite bases, and finite precision, eventually conspire against it.

That’s all for now! If you like my efforts to make quantum science, computing and physical chemistry, more accessible to everyone; please consider recommending this newsletter on your own substack or website. Or share ExoArtDataPulse with a friend or colleague. Every recommend makes the project grow. Thanks for Reading!

Support our workstation fundraiser here :-)

These are three distinct strategies for computing how a quantum state evolves in time without directly exponentiating the full Hamiltonian matrix, which becomes prohibitively expensive for large systems.

Krylov subspace methods work by projecting the problem onto a much smaller space. Rather than acting with the full matrix, you build a compact subspace. The Krylov subspace by repeatedly applying H to the initial state vector: {v, Hv, H²v, H³v, ...}. The key insight is that time evolution tends to live mostly within this low-dimensional subspace, so you can solve a small problem there and get a good approximation to the full answer.

Padé approximations are a way of approximating a function using a ratio of two polynomials, rather than a single polynomial series. For the matrix exponential specifically, a rational approximation of the form P(H)/Q(H). That is, where P and Q are polynomials, converges much faster and behaves more stably than a truncated Taylor series. It is analogous to how 1/(1−x) is a better approximation to some functions than 1 + x + x² + ... truncated at a few terms.

Split-operator schemes exploit the structure of the Hamiltonian. In many physical systems H can be written as a sum of two parts i.e. typically kinetic energy T and potential energy V; which are each simple to exponentiate individually, even if e^(−iHt) as a whole is not. The Trotter–Suzuki decomposition lets you approximate e^(−i(T+V)t) ≈ e^(−iVt/2) e^(−iTt) e^(−iVt/2), with the error controllable by the step size. In practice this is often combined with a Fourier transform, since T is diagonal in momentum space and V is diagonal in position space.