Qudits today

Calling all dimensions!

In the previous post, we introduced qudits as an advance on what can be done with qubits. However, there is an elephant in the room, so to speak. It is this:

If qudits are better, and if higher dimensional systems offer genuine advantages, then what does that say about everything that has been built so far? Are the roadmaps being announced with such confidence by major technology companies already obsolete before they have been realised?

I’m guessing that acknowledging a richer way to think about quantum hardware might feel, to some, like pulling at a thread that could unravel a carefully constructed narrative.

So before going any further, let’s address the issue directly, because I believe such concerns are based on a misreading of what qudits represent.

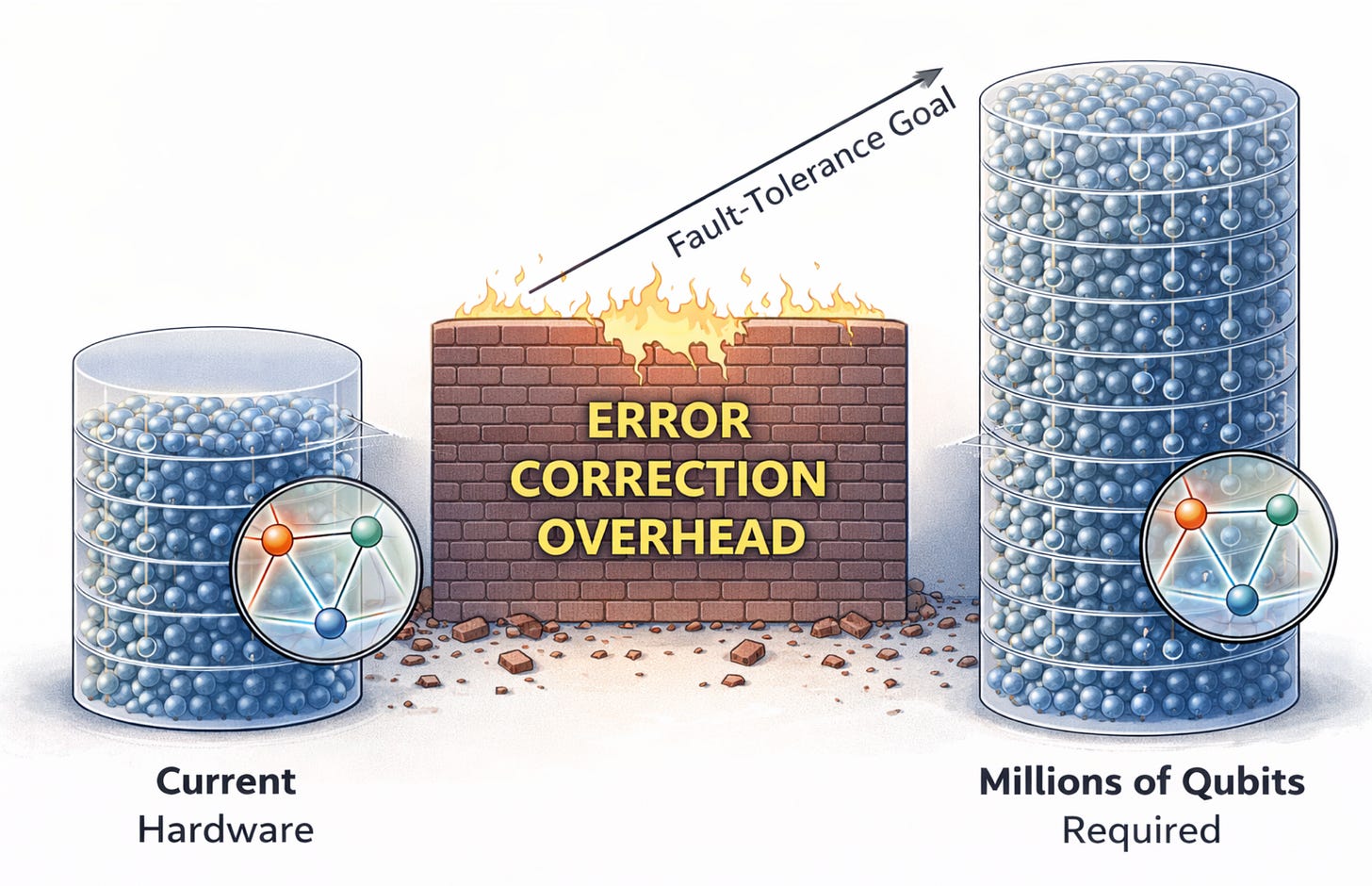

The dominant error correction codes, surface codes, are built around qubits and have been studied exhaustively. Real experimental results, however modest by the standards of what full fault tolerant quantum computing will eventually require, are already being produced on qubit hardware.

Indeed, error correction is the central problem of scalable quantum computing today. The overhead it imposes is the primary reason that building a fault tolerant quantum computer is so hard.

Estimates for the physical qubit counts required to run practically useful fault tolerant algorithms, once error correction overhead is factored in, run into the millions for some approaches. The gap between what current hardware delivers and what is needed is enormous, and a significant fraction of that gap is error correction overhead[1].
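For readers who like to see the arithmetic, here is a minimal back-of-envelope sketch in Python. The overhead range is the rough surface code figure quoted in the error correction footnote at the end of this post (about 100 to 1,000 physical qubits per logical qubit). The logical qubit count is purely my own illustrative assumption, not an estimate for any real algorithm or machine.

```python
# Back-of-envelope estimate of physical qubit counts.
# Overheads: rough surface code figures quoted in the footnote of this post.
# Logical qubit count: a made-up workload size, for illustration only.

SURFACE_CODE_OVERHEAD = (100, 1_000)  # physical qubits per logical qubit (low, high)
HYPOTHETICAL_LOGICAL_QUBITS = 2_000   # assumption, not a real requirement


def physical_qubits(logical_qubits: int, overhead_per_logical: int) -> int:
    """Physical qubits needed if every logical qubit costs the same overhead."""
    return logical_qubits * overhead_per_logical


low = physical_qubits(HYPOTHETICAL_LOGICAL_QUBITS, SURFACE_CODE_OVERHEAD[0])
high = physical_qubits(HYPOTHETICAL_LOGICAL_QUBITS, SURFACE_CODE_OVERHEAD[1])

print(f"{HYPOTHETICAL_LOGICAL_QUBITS:,} logical qubits -> "
      f"{low:,} to {high:,} physical qubits")
```

With these assumed inputs the range runs from 200,000 to 2,000,000 physical qubits, which is how a modest-sounding logical qubit count ends up "in the millions" once the overhead multiplier is applied.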

Higher dimensional systems offer different error models, and in some regimes, error correction codes built around qudits can achieve more compact logical encodings or higher thresholds than their qubit equivalents.

Qudits look promising because, if we can represent the same logical information in fewer physical carriers, or protect it with a lower overhead, the downstream consequences for hardware requirements are significant.

It’s not all plain sailing though. Qudits introduce more complex noise. More pathways exist for errors to occur when we are working with more energy levels, and characterising those pathways, let alone correcting them, is harder. Calibration becomes more demanding.
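One way to make "more pathways" concrete is to count the elementary error operators on a single carrier. The sketch below assumes errors are expanded in the generalised Pauli basis, where each operator is a product of a level shift X^a and a phase "clock" Z^b with a and b running from 0 to d-1. That is a standard textbook choice rather than a model of any particular device, and real hardware noise (leakage, level-dependent dephasing and so on) need not be spread evenly across these operators. The function name is my own.

```python
from itertools import product


def error_labels(d: int) -> list[tuple[int, int]]:
    """Labels (a, b) of the non-identity operators X^a Z^b on one d-level system.

    (0, 0) is the identity, i.e. "no error", so it is excluded.
    """
    return [(a, b) for a, b in product(range(d), repeat=2) if (a, b) != (0, 0)]


# Compare a qubit (d=2) with a qutrit (d=3) and a four-level ququart (d=4).
for d in (2, 3, 4):
    print(f"d = {d}: {len(error_labels(d))} distinct single-carrier error operators")
```

For a qubit this gives the familiar three (X, Z and their product, which is Y up to a phase). The count grows as d² − 1, so a qutrit already has 8 and a four-level system has 15, which gives some feel for why characterisation and calibration get harder.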

The theoretical apparatus required to design and analyse qudit based error correction codes is more involved than the equivalent qubit theory. Some of the intuitions that have been built up over years of working with two level systems simply do not transfer cleanly.

Leading groups in this space include the University of Oxford's Ion Trap Quantum Computing Group and companies like Quantinuum, whose hardware has been used in qudit gate research for applications such as simulating gauge theories in particle physics.

Meanwhile, superconducting platforms from IBM and Google Quantum AI (Quantinuum) also harbour higher energy states within their devices, states that exist whether or not engineers choose to exploit them.

On the photonic side, PsiQuantum is building large-scale quantum computers using single photons as qubits (PsiQuantum), an approach that in principle supports high-dimensional encodings, while QuEra Computing specialises in neutral-atom quantum computing using Rydberg atom technology.

Each Rydberg atom has a whole ladder of different energy levels available, not just two. If you can control which rung of the ladder your atom sits on, you can use it to store more than one bit's worth of information, and that's a qudit. Companies like QuEra are exploring how to harness this richness in a scalable way, using arrays of atoms held in place by focused laser beams called ‘optical tweezers’.
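To put a number on "more than one bit's worth", here is a small Python sketch. It only compares state-space sizes: a carrier with d usable levels corresponds to log2(d) bits, and the helper asks how many such carriers are needed before their combined state space is at least as large as that of n qubits. The function names and the 100-qubit example are my own illustrative assumptions, and nothing here accounts for noise or error correction.

```python
import math


def bits_per_carrier(d: int) -> float:
    """Bits' worth of state space in one d-level carrier: log2(d)."""
    return math.log2(d)


def carriers_needed(n_qubits: int, d: int) -> int:
    """Smallest m such that d**m >= 2**n_qubits.

    Uses exact integer arithmetic to avoid floating-point rounding.
    """
    target = 2 ** n_qubits
    capacity, m = 1, 0
    while capacity < target:
        capacity *= d
        m += 1
    return m


# Hypothetical register of 100 qubits, re-expressed with d-level carriers.
for d in (2, 3, 4, 8):
    print(f"d = {d}: {bits_per_carrier(d):.2f} bits per carrier, "
          f"{carriers_needed(100, d)} carriers to match 100 qubits")
```

Under these assumptions, 100 qubits' worth of state space fits into 64 three-level carriers, 50 four-level carriers, or 34 eight-level carriers. Whether that saving survives contact with real noise is exactly the open engineering question discussed above.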

Spin-based approaches also enter the picture. QuTech at Delft University of Technology is a world-leading centre for semiconductor spin qubit research, and spin systems can in principle encode more than two levels.

To put all this in perspective, classical computing didn’t begin with bytes, or words, or vector registers. It began with bits: single binary digits. I know, I was there[2]. The field then learned to group bits into bytes, and bytes into larger words, and words into the wide SIMD registers that modern processors use to parallelise operations across many values simultaneously.

None of these developments made the earlier layers obsolete. The byte did not kill the bit. The 256 bit vector register did not make bytes irrelevant[3]. Instead, each development stacked atop what came before, offering richer primitives that the layers above could exploit while the layers below remained intact. Furthermore, enthusiasts (and investors) didn’t pull out of the race as things changed along the way. Rather the reverse!

Qudits may play an analogous role in the quantum stack. Rather than replacing qubits, they may come to be understood as richer primitives that sit alongside them: a natural choice for certain physical systems, valuable in certain circuit contexts, and integrated into architectures that remain broadly qubit centric at the level where interfaces and programming models live.

As quantum systems scale up, the inefficiencies of working within an artificially constrained subspace become more visible and more costly. The unused capacity sitting in physical systems that could support higher dimensional operation starts to look less like a technicality and more like a missed opportunity.

The question was never really whether qudits matter. The more useful questions are where they matter most, under what engineering conditions, and how the transition to richer hardware primitives will be navigated. Those questions are tractable, and the community is actively working on them.

That’s all for now! If you like my efforts to make quantum science, computing and physical chemistry more accessible to everyone, please consider recommending this newsletter on your own Substack or website. Or share ExoArt DataPulse with a friend or colleague. Every recommendation helps the project grow. Thanks for reading!

[1] During my fact-checking exercise with Claude AI, an alternative to surface codes emerged from the discussion. We have a mini-series on error correction coming up in May, so more on all of this later. For now, here’s what Claude had to say.

“…

The dominant approach today is called the surface code. It’s popular because it only requires qubits to talk to their immediate neighbours (think of a neat grid, like a chessboard), which is relatively straightforward to build in hardware. The catch is that the surface code encodes only one logical qubit per code block, regardless of the code’s size, which leads to a large resource overhead for scalable quantum computing. (Riverlane) Current estimates suggest surface codes need roughly 100 to 1,000 physical qubits per logical qubit, potentially rising to several thousand for very demanding applications. (The Quantum Insider)

A newer family of codes called LDPC codes (Low-Density Parity Check) promises to do much better. The key idea is to allow qubits to interact with others that aren’t immediate neighbours: a more complex wiring arrangement, but one that pays off handsomely. IBM scientists found LDPC codes that feature a more than tenfold reduction in the number of physical qubits relative to the surface code, by encoding more information into the same number of physical qubits. (IBM) Some companies claim even larger gains: implementations using LDPC-style codes have demonstrated up to 20 times fewer physical qubits per logical qubit compared to surface codes. (IonQ)”

[2] Check out Byte Magazine Issue #1, September 1975. This one carries various great articles on processors and chip choices at a time when personal computing really was a ‘Home Brew’ hobby. To think Steve Wozniak started out as a home brew guy with his 'Little Blue Box'. Feels familiar, doesn’t it?

[3] If you’re interested in seeing how far we’ve come, check out Byte Magazine, August 1995, twenty years down the line from footnote 2. The ads are especially great. The advert for the Micron PC has this: “… Only the Micron Home MPC features 8MB of Micron RAM; a super-fast 4X EIDE CD-ROM drive; and full exploding stereo sound with the Sound Blaster 16, including speakers and a lightning-quick 14.4 Fax/Modem. And when you buy a Micron Home MPC, you’ll also receive Bob, Microsoft’s new user-friendly home interface. Microsoft Bob includes eight essential applications, including a calendar, letter writer, checkbook, and more, that are linked together to make everyday computing tasks easy. With Bob around, you’ll really get things done!”

Thank goodness for Bob! :-))