Waves Part 3: Tools for Analysis

Analysing wave data in bulk using R Language and Knime

What a busy February it’s been! Good fun, though, and the days are growing longer again (in this hemisphere, at least), which means more time for plug-and-play audio analysis and electronic wave gathering too.

I mentioned in a recent post that once we get into analysis properly, and especially once the Quantum Music Box (QMB)[1] starts to come together, we’ll need to work through some serious datasets, notably in radio astronomy. I also said that many such datasets are publicly available.[2]

Before we start on that, though, we need to recognise that most of these archives are huge. No doubt our eventual observations will be describable in discrete formulae and associated processes, but finding that ‘needle in a stack of needles’ is going to require some bulk data processing, I fear. As luck would have it, help is near at hand.

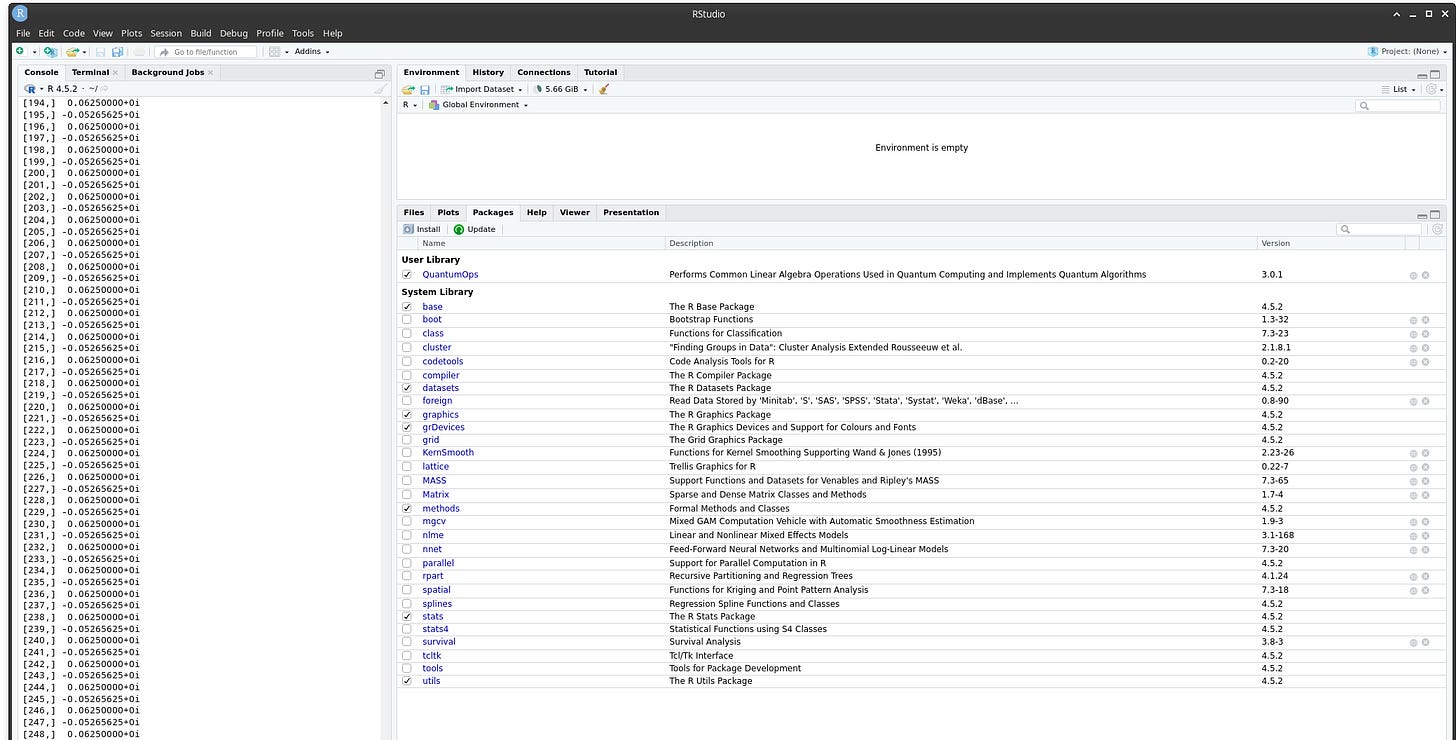

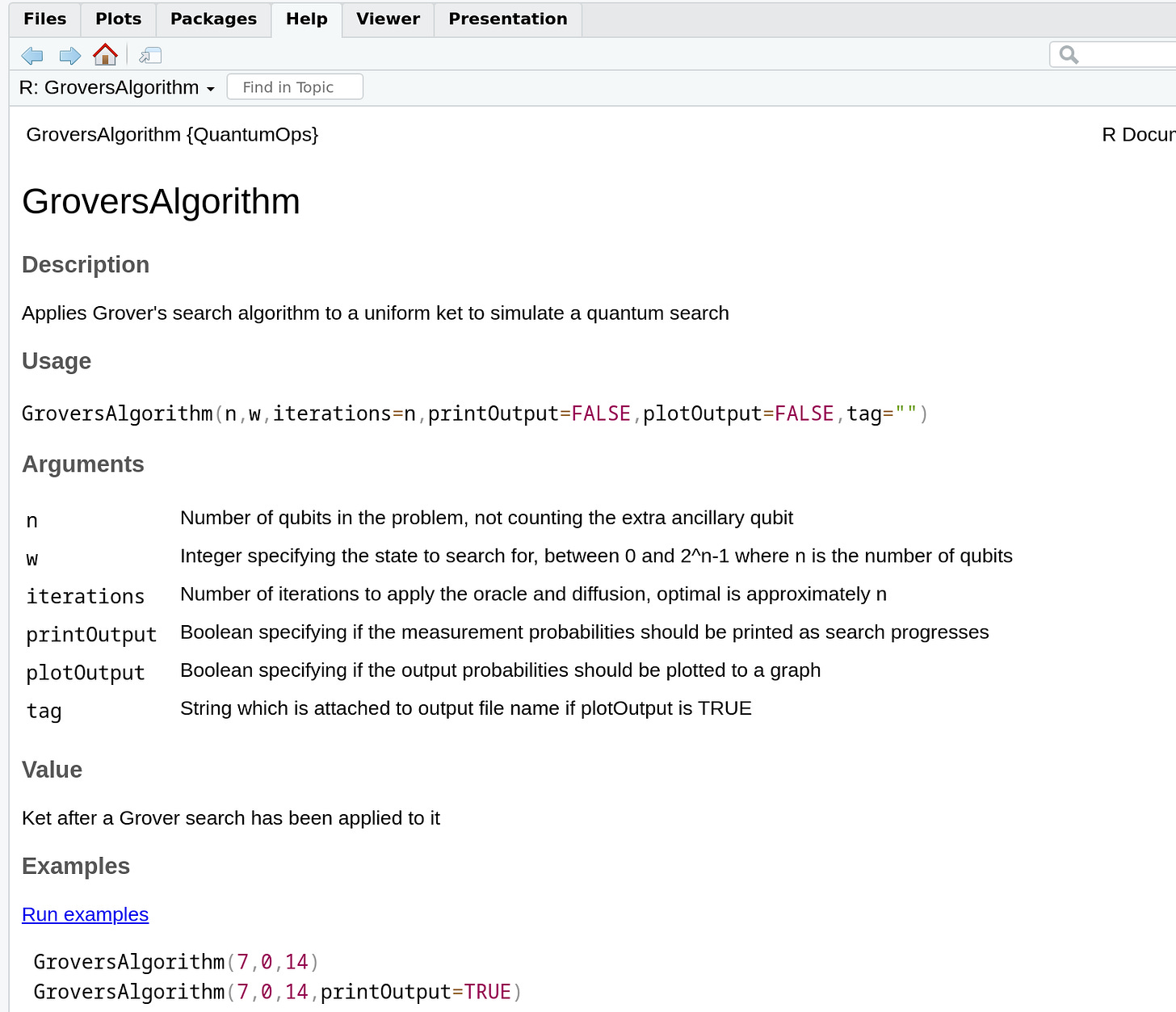

R Language can be used to compute a range of quantum functions. It is free, and it is used extensively by the scientific community in public and private research labs throughout the world. It is available for most computing platforms, including Windows, Linux and macOS, from the official R download service.[3]

What you get is a simple user interface, but with access to literally hundreds of libraries covering every aspect of mathematical, statistical and scientific analysis you might think of.

The library we immediately think of is ‘QuantumOps’, but before we get to that, let me mention ‘RStudio’. This is a thoroughly easy-to-download, easy-to-use front end, or IDE (integrated development environment), for R Language.[4]

I had to zoom in for readability, but you can get a flavour of the wide range of quantum functions available to us. We will be using R Language as required throughout our home brew work.

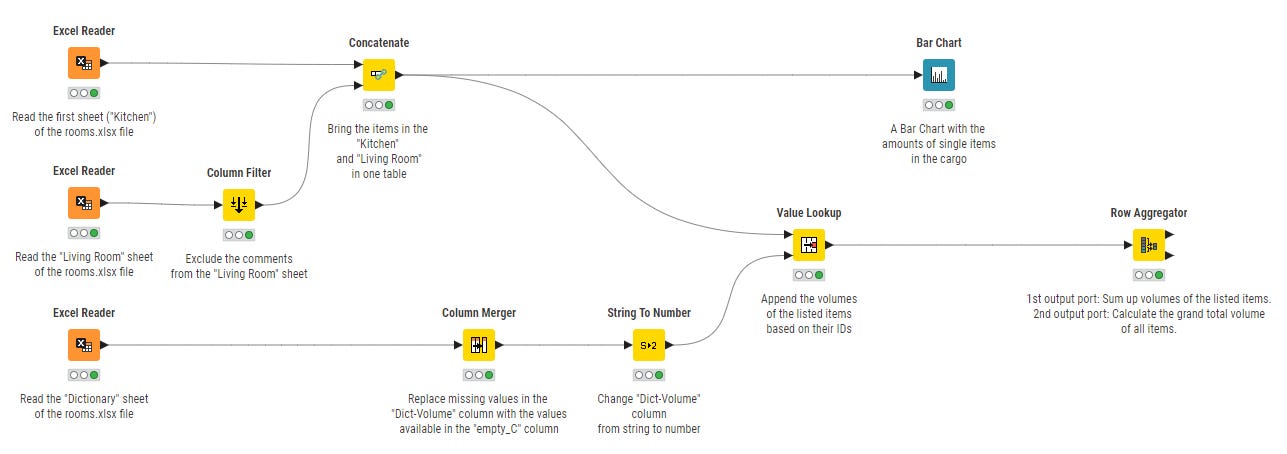

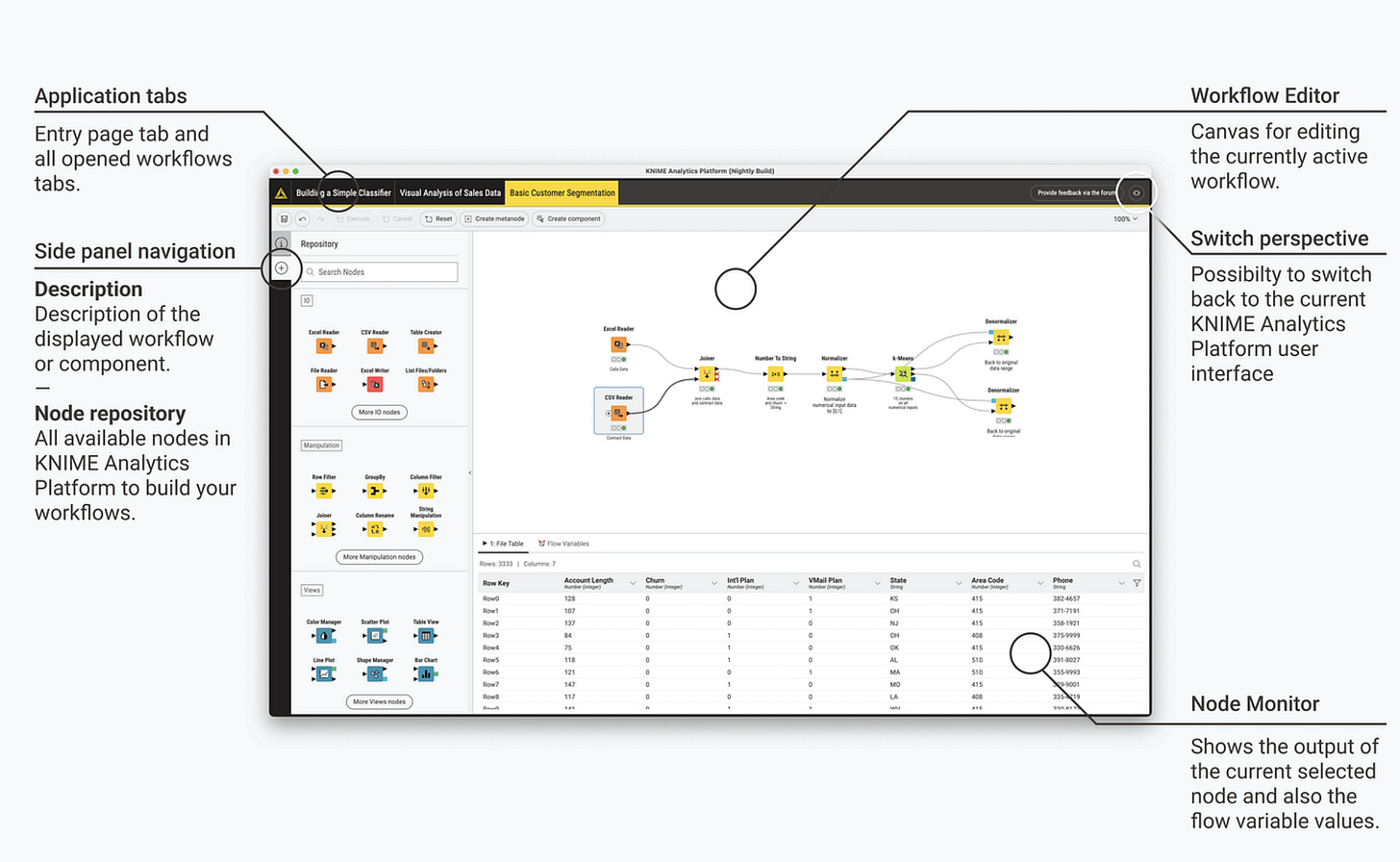

I’ve already mentioned the bulk processing of large, publicly available datasets. For that we’ll need a bigger hammer, which is where Knime comes in. And when I say bulk, I don’t just mean volume: we may also have a complex series of steps to apply to each piece of data we examine. Knime excels at this. Again, it’s free and available for Windows, Linux and macOS.
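To make ‘bulk’ a little more concrete: the usual trick for archives too big to load in one go is to stream them through in fixed-size chunks, keeping only running results in memory. Here’s a minimal Python sketch of that idea, assuming a hypothetical plain-text file with one numeric sample per line (real archives typically use formats such as FITS, which need a proper reader) and a made-up threshold for flagging candidate ‘needles’. The names `read_chunks` and `scan` are my own, for illustration only.

```python
import io
from typing import Iterator, List, Tuple

# Assumes a hypothetical plain-text format: one numeric sample per line.


def read_chunks(stream: io.TextIOBase, chunk_size: int) -> Iterator[List[float]]:
    """Yield lists of at most `chunk_size` samples, parsed one float per line."""
    chunk: List[float] = []
    for line in stream:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        chunk.append(float(line))
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk  # final, partially filled chunk


def scan(stream: io.TextIOBase, chunk_size: int = 1000,
         threshold: float = 5.0) -> Tuple[int, float, List[int]]:
    """One pass over the stream: sample count, mean, and indices above threshold.

    Only the current chunk, the running totals and the hit indices are held
    in memory, never the whole file.
    """
    count = 0
    total = 0.0
    hits: List[int] = []
    for chunk in read_chunks(stream, chunk_size):
        for offset, value in enumerate(chunk):
            if value > threshold:
                hits.append(count + offset)  # global index of the sample
        count += len(chunk)
        total += sum(chunk)
    mean = total / count if count else 0.0
    return count, mean, hits
```

In practice you’d pass an open file (for example `with open("samples.txt") as f: scan(f)`, where the filename is hypothetical) rather than an in-memory string. Memory use is then governed by the chunk size and the number of hits, not by the size of the archive.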

Anyone familiar with the IT process known as Extract, Transform and Load (ETL) will know that data often needs pre-processing to make it consistent with other datasets before ‘like for like’ analysis and reporting can take place.
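Here’s a minimal ETL sketch in Python, assuming two hypothetical CSV extracts that record the same kind of order data differently: one with UK-style dates and amounts in pounds, the other with ISO dates and amounts in pence. The column names and sample rows are invented for illustration. Extract parses each source, a per-source transform maps it onto one common schema, and load writes everything into a single (in-memory SQLite) table.

```python
import csv
import io
import sqlite3
from datetime import datetime

# Hypothetical extracts from two systems that describe the same thing differently.
SOURCE_A = "Cust Name,Order Date,Amount GBP\nAcme Ltd,03/02/2025,120.50\n"
SOURCE_B = "customer,date,amount_pence\nBeta plc,2025-02-04,9900\n"


def extract(text):
    """Extract: parse CSV text into a list of dicts, one per row."""
    return list(csv.DictReader(io.StringIO(text)))


def transform_a(row):
    """Transform system A: dd/mm/yyyy dates, amounts already in pounds."""
    return {
        "customer": row["Cust Name"].strip(),
        "order_date": datetime.strptime(row["Order Date"], "%d/%m/%Y").date().isoformat(),
        "amount_gbp": round(float(row["Amount GBP"]), 2),
    }


def transform_b(row):
    """Transform system B: ISO dates already, amounts held in pence."""
    return {
        "customer": row["customer"].strip(),
        "order_date": row["date"],
        "amount_gbp": round(int(row["amount_pence"]) / 100, 2),
    }


def load(conn, rows):
    """Load: insert common-format rows into one target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (customer TEXT, order_date TEXT, amount_gbp REAL)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (:customer, :order_date, :amount_gbp)", rows
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, [transform_a(r) for r in extract(SOURCE_A)])
load(conn, [transform_b(r) for r in extract(SOURCE_B)])
```

Each source gets its own transform because each has its own quirks; the load step only ever sees the common schema.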

ETL is most often used when moving large datasets from one database system to another. Say your company has been taken over by, or has bought out, another company, and senior management want everyone to use the same CRM[5] and all reporting across both companies to be consistent.[6]
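One practical way to check that reporting really is consistent after a migration is reconciliation: the target system should hold the same number of records, and the same control totals, as the source. A minimal Python sketch, assuming hypothetical field names for the amount column in each system. Amounts are summed as `Decimal` so that float rounding can’t produce a false mismatch.

```python
from decimal import Decimal
from typing import Dict, List, Sequence, Tuple

# Sketch only: the amount field names are hypothetical and passed in by the caller.


def control_totals(records: Sequence[Dict], amount_field: str) -> Tuple[int, Decimal]:
    """Return (record count, sum of amount_field) for one system's extract."""
    total = sum((Decimal(str(r[amount_field])) for r in records), Decimal("0"))
    return len(records), total


def reconcile(source: Sequence[Dict], target: Sequence[Dict],
              source_field: str, target_field: str) -> List[str]:
    """Compare counts and totals between systems; an empty list means they agree."""
    src_count, src_total = control_totals(source, source_field)
    tgt_count, tgt_total = control_totals(target, target_field)
    problems: List[str] = []
    if src_count != tgt_count:
        problems.append(f"record count: source {src_count}, target {tgt_count}")
    if src_total != tgt_total:
        problems.append(f"amount total: source {src_total}, target {tgt_total}")
    return problems
```

Counts and totals won’t catch every error, but when they disagree you know immediately that something was dropped or duplicated on the way across.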

It also happens within companies, frequently across groups, that two or more teams use widely differing information systems. For instance, a small ‘boutique’ advertising consultancy keeps its records in an MS Access database because that was the tech adopted in its early days, and the race for fame and fortune meant nobody ever touched it.

However, the company has since IPO’d, and the founders cashed out while still young enough to enjoy their new wealth. The new owners have other corporate fingers in the pie to consider, and they use everything from MS Office to SQL Server, MySQL databases, DTS workflows, Azure cloud data silos and AWS data warehouses.

Meanwhile, the aged hippy creatives in Marketing still use Macs, MS Office 365, OpenOffice and ‘iWork’. There’s even handwritten text (really, look at all those offline diaries, organisers and pocket books abounding in HR and Accounts[7]), and the beat goes on…

Before all this data can be analysed and, most importantly, reported on, it needs to be massaged into a common format. What Knime brings to the feast is an automated data processing workflow, into which we can place nodes that handle the preprocessing and transformation of every one of these data elements.
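Knime workflows are assembled visually rather than in code, but the underlying idea, a chain of nodes where each one takes a table in and passes a table on, can be sketched in a few lines of Python. This is an analogy only, not Knime’s API: the node factories `drop_missing`, `rename` and `to_float`, and any column names you feed them, are hypothetical, loosely mirroring the kind of filter, rename and type-conversion nodes you’d drop into a Knime workflow.

```python
from typing import Callable, Dict, List, Tuple

# Analogy of a Knime-style workflow in plain Python; not Knime's API.
Row = Dict[str, object]
Table = List[Row]
Node = Tuple[str, Callable[[Table], Table]]


def drop_missing(field: str) -> Callable[[Table], Table]:
    """Node factory: remove rows where `field` is missing or empty."""
    def node(table: Table) -> Table:
        return [r for r in table if r.get(field) not in (None, "")]
    return node


def rename(mapping: Dict[str, str]) -> Callable[[Table], Table]:
    """Node factory: rename columns according to `mapping`."""
    def node(table: Table) -> Table:
        return [{mapping.get(k, k): v for k, v in r.items()} for r in table]
    return node


def to_float(field: str) -> Callable[[Table], Table]:
    """Node factory: convert one column's values to float."""
    def node(table: Table) -> Table:
        return [{**r, field: float(r[field])} for r in table]
    return node


def run_workflow(nodes: List[Node], table: Table) -> Tuple[Table, List[str]]:
    """Run nodes in order, each consuming the previous output; log row counts."""
    log: List[str] = []
    for name, node in nodes:
        before = len(table)
        table = node(table)
        log.append(f"{name}: {before} -> {len(table)} rows")
    return table, log
```

Nodes can be reordered or swapped without touching the others, and the log leaves a row-count trail for every step, which is a small taste of the transparency a visual workflow gives you.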

Knime is particularly strong for us when it comes to sharing our results with the scientific community at large, because to do that we’ll need repeatable, explainable analysis. In short, Knime is an accessible, visual, node-based analytics platform that lets us build transparent, reproducible ETL data pipelines without massive or complex coding.[8]
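Knime saves the workflow itself, so the steps can be rerun. A complementary habit for shareable results is to record exactly what went in: a fingerprint of the input data and settings alongside the list of steps. A minimal Python sketch, assuming JSON-serialisable inputs; the manifest fields are my own hypothetical choice, not any standard.

```python
import hashlib
import json
from typing import Any, Dict, Sequence

# Manifest layout is a hypothetical choice, not a standard.


def fingerprint(obj: Any) -> str:
    """SHA-256 hex digest of a canonical JSON encoding (sorted keys, fixed separators)."""
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def run_manifest(input_rows: Sequence[Dict[str, Any]], step_names: Sequence[str],
                 settings: Dict[str, Any]) -> Dict[str, Any]:
    """Describe one run: what went in, which steps ran, and with what settings."""
    return {
        "input_sha256": fingerprint(list(input_rows)),
        "record_count": len(input_rows),
        "steps": list(step_names),
        "settings_sha256": fingerprint(settings),
    }
```

Sorting keys before hashing means two runs over the same data give the same fingerprint even if the fields arrive in a different order. Change one setting and the fingerprint changes, which makes it easy for someone else to confirm they reproduced the same run.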

A strong selling point in my book[9] is that we can develop our own internal data structures and standards for rapidly processing the datasets we get our hands on, and then ‘ETL’ them into whatever formats the industry and research community require. We could even consider creating open-source repositories, available to all, when we want to make our findings publicly accessible. My point is that with Knime and R Language we have options (and did I say they’re both free?).
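As an illustration of ‘our own internal standard, exported to whatever format is required’, here is a minimal Python sketch: a hypothetical `Observation` record (the field names are my assumptions, not an agreed standard) written out as CSV and as JSON. Real research formats such as FITS or VOTable would need a dedicated library (Astropy reads and writes both); CSV and JSON stand in for them here.

```python
import csv
import io
import json
from dataclasses import asdict, dataclass, fields

# Hypothetical internal record standard; field names are assumptions.


@dataclass(frozen=True)
class Observation:
    """One measurement in our (hypothetical) internal format."""
    source: str
    timestamp_utc: str   # ISO 8601, e.g. "2026-02-01T12:00:00Z"
    frequency_hz: float
    flux: float


def to_csv(observations):
    """Export to CSV, with the header row taken from the dataclass fields."""
    buffer = io.StringIO()
    names = [f.name for f in fields(Observation)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))
    return buffer.getvalue()


def to_json(observations):
    """Export to a JSON array of objects."""
    return json.dumps([asdict(o) for o in observations], indent=2)
```

Because every exporter reads from the same record type, adding a new target format means writing one more function rather than touching the internal standard.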

Clearly, there’s a lot to follow up here, and I haven’t really touched on the AI tools we might throw into the mix, especially on the fact-checking and reporting side. Initially, though, I’d like us to be rock-solid confident in what we’re doing by getting the ETL right first and building our analyses on that.

By the way, I hope you can start to see how realistic our ambitions are, given a modest investment in kit.[10]

Finally, what’s missing from this post is the choice of a reporting tool or tools (Knime has some), but I want to survey what’s out there first to see which are best of breed and what open-source strategies are available. My industry background is in QlikView and MS Power BI (both of which I’m trained in and therefore pretty comfortable with), but these are quite expensive commercial packages, and their cost structure isn’t really appropriate for a Home Brew QMB lab such as ours.

That’s all for now! If you like my efforts to make quantum science, computing and physical chemistry more accessible to everyone, please consider recommending this newsletter on your own Substack or website, or share ExoArtDataPulse with a friend or colleague. Every recommendation helps the project grow. Thanks for reading!

[1] See ‘Home Brew Quantum’, ExoArtDataPulse, January 9th, 2026.

[2] For instance, the National Radio Astronomy Data Archive.

[4] You can get RStudio for free, plus setup and configuration guidance, from Posit. They also have a cloud version, and though I’ve been an R user for a long time, I’ve not actually tried that yet.

[5] Customer Relationship Management (CRM) system: ‘Front Office’ to ‘Back Office’ systems that do everything from placing the order to troubleshooting customer delivery issues, managing credit and collecting the cash. These can be huge, such as SAP, and are often originally based on a production management system, as are its chief competitors such as Epicor and Oracle. (Suites of this breadth are more usually classed as ERP, enterprise resource planning, systems.)

CRM systems more closely tailored to customer service, the sales process and the order-to-cash flow include Salesforce, HubSpot, Zoho CRM and Microsoft Dynamics 365. More here if you need them.

My point is that you can see how huge and complex the data structures in one CRM can be, let alone across several. I once successfully completed the ETL from Epicor to SAP for a famous Scottish drinks distributor based in Stirling. It took about six months, given that key users (me too) had to keep up their day jobs and handle the ETL outwith that. It was very satisfying to get a positive sign-off from both the source and target system stakeholders.

It didn’t save my job, though. Out with the old and in with the new! TBH I was ready for a change by the time the chop came, and the pay-off bought us one of the best family holidays ever!

[6] Not an unreasonable desire, considering the adage: ‘A man with a watch knows the time. A man with two watches? Never quite sure!’

[7] On the basis that ‘if it ain’t online, it can’t get hacked!’ Crypto users keep ‘paper wallets’ for the same reason. Also, senior execs, the ones who survive the longest, like to keep some things close to the heart. Literally, in some cases.

[8] Having said that, Knime still allows Python and R when needed. We can also partition the workflows, so the workflows and ETL strategies we employ will depend on the use case.

[9] I must write it one day :-))

[10] More on this later. In late March we’ll be launching a fundraising campaign for workstation kit. I’d rather go the fundraising route so I can keep this blog free indefinitely.